Is it possible to generate an episode in the style of an established comedy podcast using various AI tools and produce a result that sounds as authentic as possible? Driven by this question, the podcast project was born: a collaboration between the entertainment podcast DAS PODCAST UFO and a team from the RHET AI Center at the University of Tübingen.

The project

The emergence of generative AI tools presents new challenges to our society: when we engage with media content and news, the increasing presence of AI-generated content constantly confronts us with questions about the authenticity of the images, videos, audio clips, and voices we encounter online, as well as the intended purpose of this AI-generated content. Where does AI-generated content simulate alternative realities that we do not immediately perceive as such? What content can we still trust and how do we navigate this changed media landscape safely and with awareness? Our project is rooted in the tension between these questions and challenges. As a research center for rhetorical science communication on artificial intelligence, we see it as our responsibility to find answers to these questions in dialogue with society and to provide guidance in the surrounding discourse.

In 2024, the hosts of DAS PODCAST UFO contacted us about a collaboration: they wanted to work with us to create an episode in their own podcasting style that was generated entirely by artificial intelligence. From a science communication perspective, this was a very exciting prospect for us. The project aims to explore the limits and possibilities of creative AI generation and of co-creative interaction between humans and AI tools, and to further stimulate the discourse surrounding the use of generative AI: Where does the use of generative AI tools make sense, and where does it not? How can consumers recognize AI-generated content? What does responsible use of generative AI look like? How are viewing, listening, and reading habits changing due to these technologies? These are all questions that we as a society must ask ourselves, discuss publicly, and research scientifically.

At the RHET AI Center, we see this project as a unique opportunity to research the field of co-creativity and the aesthetics of impact in AI-generated media content across all stages of production and distribution, and to test the viability of rhetorical strategies when dealing with generative AI. At the same time, this project aims to raise public awareness of the social dimensions of AI-generated content and to foster open discussion on these issues. In particular, we are deeply committed to the competent and responsible use of AI and AI tools, known as AI literacy. The podcast project offers an opportunity to explore this topic in a non-academic, entertainment-oriented context together with the hosts and the PUFO community. On Monday, March 16, 2026, listeners of DAS PODCAST UFO had the opportunity to ask the researchers questions about the project and the research during an "Ask Me Anything" session hosted on the podcast's own Reddit page.

We are happy to have the entertainment podcast DAS PODCAST UFO and its two hosts, Stefan Titze and Florentin Will, as project partners by our side, with whom we can conduct this social experiment and thus perform important pioneering work in the German-language AI discourse.

Goal and structure of the research project

The goal of the research project was to create a podcast episode of PODCAST UFO that sounded as authentic as possible using various AI tools. Another podcast episode, in which the project is analyzed, contextualized, and explained, was part of the design from the very beginning.

The entire process of the project is of interest to our work at the RHET AI Center. Which criteria does an AI-generated episode need to meet to sound authentic? How can we train generative AI and design a strategic prompting process so that the output is convincing and authentic? How much human influence does this process require, and at which points is this influence particularly necessary? How does an episode like this affect listeners? Where does the illusion break? How do listeners react to the episode after being informed of its AI-generated nature, and what discourse does the project spark? These are some of the questions that arose during the course of the project and that we intend to address in our research.

Work on the project began in January 2025 and proceeded in small steps up to the release of the AI-generated episode on March 11, 2026. This was followed by the scientific observation of the ensuing discourse and the release of the explanation episode on March 12, 2026.

AI tools used

During the production of the podcast episode, our goal was to generate as many ideas, as much text, and as much audio material as possible with AI tools. To achieve this, we divided the work into four parts and selected a suitable AI tool for each of them. We began by transcribing the podcast's audio content to create a text database, which we then used to train another AI tool to generate the episode's text. Next, we used a third AI tool to generate the audio content for the episode. Finally, a fourth AI tool was used to create a listener-style intro typical of the podcast.

- WhisperX is an AI-powered automatic speech recognition (ASR) tool based on a neural network. The tool enables users to quickly and accurately transcribe large amounts of audio material into text.

- In our project, we used WhisperX to transcribe 50 episodes of the podcast DAS PODCAST UFO. We needed the transcribed podcast episodes as a data set for the AI tool, which we used for text generation.

- ChatGPT is a generative AI tool developed by OpenAI that is based on a so-called Large Language Model (LLM) and operates using a Transformer architecture and reinforcement learning. It functions as a chatbot and is trained to generate responses in natural language.

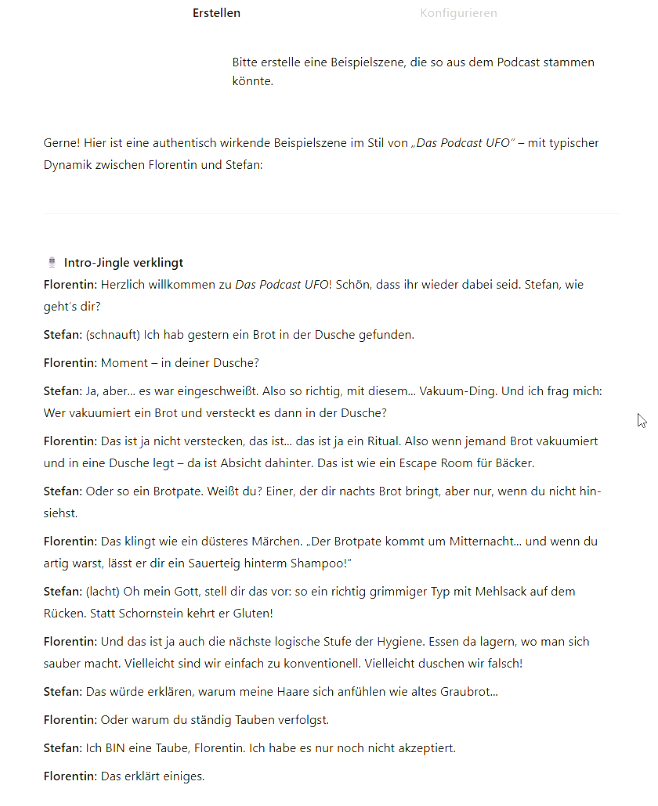

- To generate the text for the episode, we created a so-called CustomGPT. This is a specific ChatGPT model that, in addition to ChatGPT's basic training and general operating instructions, is supplemented with custom prompts, databases, and specifications, allowing it to be trained for specific tasks.

In our case, we created a base prompt for the CustomGPT, clearly specifying the purpose of the generated text segments and the data to be taken into account. In addition, we provided the CustomGPT with an extensive knowledge base. This included not only episode transcripts but also analyses of the podcast's composition, structure, style, and language, as well as features specific to spoken language (such as breaks within a sentence, filler words, repetitions, and more).

In a long and detailed process, we generated the podcast episode text step by step. This involved multiple feedback loops within the project team, and the resulting input was fed back into the generation process with ChatGPT.

Overall, we worked on the generation over a period of approximately eight months. The models used included GPT 4.5–5.1. To create the CustomGPT, we used a paid Plus version of ChatGPT.

- ElevenLabs is a provider of various AI-based audio tools and specializes in the generation of authentic-sounding voices. The tool is based on complex deep learning models trained to recognize how humans naturally speak and to reproduce these patterns. To achieve this, ElevenLabs employs multiple layers of neural networks, as well as generative adversarial networks (GANs), and a specific transformer architecture.

- In our project, we used ElevenLabs as a tool for generating the audio of the podcast episode. For this purpose, we relied on the Instant Voice Cloning feature, which we trained using audio material from the two podcast hosts in order to obtain authentic-sounding voice clones. These voice clones were then used within the tool's text-to-speech function to vocalize the generated text segments of the episode. Here, too, an extensive feedback process and a highly detailed workflow were necessary to achieve satisfying results with the tool.

- In our project, we used versions V2, V3-alpha, and V3 of ElevenLabs' text-to-speech tool. We worked with the Creator subscription plan.

- Udio is a generative AI tool trained for music generation. Users can generate a piece of music via a prompt, specifying elements such as genre, storyline, themes, lyrics, and more.

- In our project, we used Udio to generate an intro for the podcast. Traditionally, PUFO listeners submit self-created intros to DAS PODCAST UFO, which are then used for each new episode. In order to meet our aim of maximizing the use of AI, we used Udio to generate such an intro.

The creation process

Work on the project and the creation of the final product "Experiment" took place in close collaboration with our project partners from DAS PODCAST UFO and unfolded in three phases. During each of these phases, we continuously evaluated our results with Stefan Titze and Florentin Will and adjusted them based on their input.

Our goal was to generate a podcast episode using AI tools that would sound as authentic as possible. To achieve this, it was first necessary to analyze the original podcast and determine how an AI-generated episode would need to be structured in order to meet this objective.

In our analysis, we examined various levels of the podcast:

- overall structure, selection of topics, recurring elements and the arrangement of content

- humor structure, comedic elements and the structure of thematic bits

- speaker dynamics, turn-taking and narrative style

- language and form, stylistic devices and speaker-specific characteristics

- voice and manner of speaking, modulation and dialect

To carry out the analysis, we listened to a large number of episodes of DAS PODCAST UFO. In addition, we used the AI tool WhisperX to transcribe 50 episodes of the podcast. These transcripts, along with the results of our analysis, formed the training basis for the CustomGPT used for text generation.
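As an illustration of how such transcripts could be turned into plain text for a knowledge base, here is a minimal Python sketch. It is not the project's actual post-processing, which is not described here. It assumes segments shaped like the dictionaries WhisperX returns (`start`, `end`, `text`, plus a `speaker` label when speaker diarization is enabled); the function names `merge_turns`, `format_timestamp`, and `to_transcript` are hypothetical.

```python
from typing import Iterable


def merge_turns(segments: Iterable[dict]) -> list[dict]:
    """Merge consecutive segments from the same speaker into one turn.

    Assumption: each segment has "start", "end" and "text" keys, and
    optionally a "speaker" key (present only if diarization was run).
    """
    turns: list[dict] = []
    for seg in segments:
        speaker = seg.get("speaker", "UNKNOWN")
        text = seg["text"].strip()
        if not text:
            continue  # skip empty segments
        if turns and turns[-1]["speaker"] == speaker:
            # Same speaker keeps talking: extend the current turn.
            turns[-1]["text"] += " " + text
            turns[-1]["end"] = seg["end"]
        else:
            turns.append(
                {"speaker": speaker, "start": seg["start"],
                 "end": seg["end"], "text": text}
            )
    return turns


def format_timestamp(seconds: float) -> str:
    """Render seconds as HH:MM:SS (fractions of a second are dropped)."""
    total = int(seconds)
    return f"{total // 3600:02d}:{total % 3600 // 60:02d}:{total % 60:02d}"


def to_transcript(segments: Iterable[dict]) -> str:
    """Produce one line per turn: "[HH:MM:SS] SPEAKER: text"."""
    return "\n".join(
        f"[{format_timestamp(t['start'])}] {t['speaker']}: {t['text']}"
        for t in merge_turns(segments)
    )
```

Merging consecutive segments into turns keeps the back-and-forth between the two hosts visible in the text, which matters when the transcripts are meant to convey speaker dynamics and turn-taking rather than content alone.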

At the same time, we trained the AI tool ElevenLabs using audio sequences from the podcast and created voice clones for the two hosts.

However, the processes of analysis and training were not completed prior to the start of the generation phase. Rather, throughout the generation process, we repeatedly identified areas in which the AI tools required further training based on additional material. For example, our analysis of humor structure was only initiated during the course of the generation process.

In the second phase of the project, we began generating the text for the episode. To this end, we created a CustomGPT with a base prompt and used the AI tool to develop potential topics for the episode.

Based on these topics, we let the AI generate dialogues. We then refined these in an intensive editing process within the team and together with our project partners, adjusting them in fine detail with the CustomGPT until the results were satisfactory. The process lasted from April to December 2025 and required many hours of work. We worked on individual bits (thematic segments), which were subsequently combined.

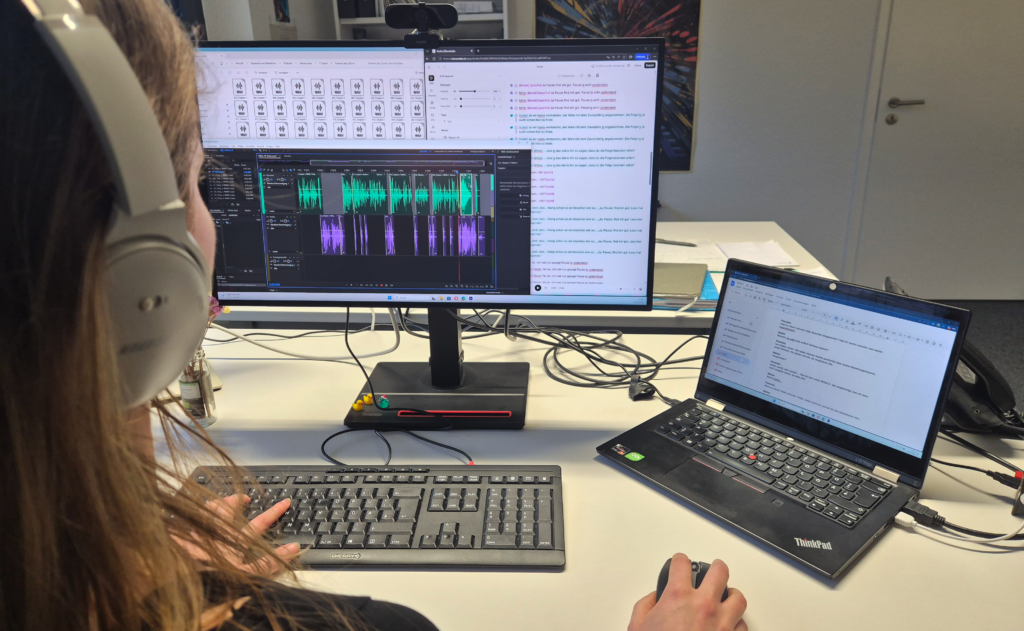

At the same time, beginning in May, we started to generate audio for individual bits. The audio generation process was likewise carried out in fine-grained steps: first, the bit texts were set up as audio projects in ElevenLabs, and based on these texts, the individual conversational turns were synthesized one after another.

This was complemented by the adjustment of subtle aspects of speech delivery, such as the nonverbal expression of emotions (e.g. through changes in pitch, speech rate, or pausing). These were partly inferred by the system from the wording and punctuation of the input turns, and partly guided by stylistic prompts embedded in the speech text, indicated in square brackets "[…]". Since the outputs from ElevenLabs varied considerably in terms of vocal baseline and audio quality (for instance, some files contained noticeable interference), multiple versions of each utterance were generated. Tonally matching segments were then selected and edited together in Adobe Audition.
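The bookkeeping behind this workflow (turns with embedded style prompts, several generated versions per turn, and a manual choice among them) can be sketched in a few lines of Python. This is only an illustrative model under stated assumptions, not the project's tooling: the class and function names, the file naming scheme, and the example tag are hypothetical, and the actual synthesis in ElevenLabs and the listening-based selection are deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    """One conversational turn of a bit."""
    speaker: str
    text: str
    style_tags: list[str] = field(default_factory=list)  # e.g. ["laughs"]

    def speech_text(self) -> str:
        """Text sent to text-to-speech, with style prompts in square brackets."""
        prefix = "".join(f"[{tag}] " for tag in self.style_tags)
        return prefix + self.text


@dataclass
class Take:
    """One generated version of a turn."""
    turn_index: int
    version: int
    path: str             # hypothetical output file name
    usable: bool = False  # set by a human after listening


def plan_takes(turns: list[Turn], versions_per_turn: int = 3) -> list[Take]:
    """List the takes to generate: several versions for every turn."""
    takes = []
    for index, turn in enumerate(turns):
        for version in range(1, versions_per_turn + 1):
            path = f"turn{index:03d}_{turn.speaker}_v{version}.wav"
            takes.append(Take(index, version, path))
    return takes


def select_takes(takes: list[Take]) -> dict[int, Take]:
    """Pick, for each turn, the lowest-numbered take marked as usable."""
    chosen: dict[int, Take] = {}
    for take in sorted(takes, key=lambda t: (t.turn_index, t.version)):
        if take.usable and take.turn_index not in chosen:
            chosen[take.turn_index] = take
    return chosen
```

In this sketch, the judgment of whether a take matches tonally stays with a human listener (the `usable` flag); the code only keeps track of which version of which turn ends up in the edit.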

In a final revision step, conversational particles typical of everyday speech (e.g. "hm", "ja", "okay", "mhm") were generated and inserted at appropriate points within the Audition sessions. The majority of the audio generation work took place between January and March 2026.

In the third phase of the project, we focused on preparing the publication and monitoring the ensuing discourse. Planning for this phase had been ongoing since the beginning of the project, as we recognized early on both the potential for science communication and the significant research interest inherent in the project.

We clearly defined our underlying motivation, as well as the questions and the type of discourse we aimed to initiate. We closely followed the prevailing public discourse on AI and identified specific areas in which we sought to provide new impulses. From the outset, the "reveal" episode of March 12, 2026, was conceived as an essential component of the project and as an opportunity to introduce these impulses in a publicly visible way.

We continue to observe the discourse surrounding both the release of the episode and the reveal episode. In addition, we are working on an academic evaluation of the project. Initial publications are already available, with further outputs currently in preparation.

The podcast episodes (in German)

- AI-generated episode: Experiment

- Resolution/Behind-the-scenes episode: UFO506 KI-Experiment Auflösung

Information about the podcast

DAS PODCAST UFO is a comedy podcast that has been running successfully since 2014. Every week, the two hosts, Stefan Titze and Florentin Will, discuss everyday life and the absurd, pop culture, media, and personal observations. The podcast is known for its improvised segments and a soft spot for the surreal, blending humor and creativity. More information about the podcast is available on the website and the "Pufopedia" (both in German).

Stefan Titze is a screenwriter, producer, and comedian. He was part of the writing team for Neo Magazin Royale and is a co-creator and writer of the multi-award-winning Netflix series How to Sell Drugs Online (Fast). In addition to his work as co-host of DAS PODCAST UFO, he is active in various television and streaming productions.

Florentin Will is an actor, comedian, and host. He has worked both in front of and behind the camera for Neo Magazin Royale and has appeared in numerous comedy and entertainment shows for television and digital platforms. In addition to co-hosting DAS PODCAST UFO, he works as a host and creative at Rocket Beans TV.

Outside of DAS PODCAST UFO, Florentin Will (left) and Stefan Titze (right) also regularly perform together on stage as improv comedy artists. (CC: Joseph Strauch)

Academic publications

Köhler, Anna; Volz, Carolin; Gottschling, Markus (2025): "Wie echt klingt KI?" In: Bieck, Julia; Stavesand, Meena: 10 Jahre HOOU. 10 Stimmen zum Podcasting. pp. 59–69. Available online (in German): Digital-HOOU-Podcasts-01-Druckbogen.pdf.

Köhler, Anna; Volz, Carolin (2026): "(Re-)Creating a comedy podcast using Generative AI." Presented at the Symposium Teaming Up with Generative AI: From Tool Use to Partnership. Deutsches Hygiene-Museum Dresden, Germany. March 25–26, 2026.

Volz, Carolin (forthcoming): Simulation and Authenticity – Rhetorical Strategies within the AI-generated Podcast (working title). Master's thesis in the degree program Allgemeine Rhetorik (general rhetoric).

Project lead and contact:

Anna Köhler (01/25 — 03/26)

Project coordinator and Co-Lead

Audio Production; Audio Editing; Organization; Research; Text Generation; Scientific Supervision

Contact: anna-marie.koehler@uni-tuebingen.de

Carolin Volz (01/25 — 03/26)

Co-Lead

Organization; Research; Editing; Text Generation; Scientific Supervision

Contact: carolin.volz@student.uni-tuebingen.de

Vladimir Jakimenko (02/25 — 05/25)

Audio Production; Audio Editing; Research

Alina Habermann (06/25 — 08/25)

Audio Editing

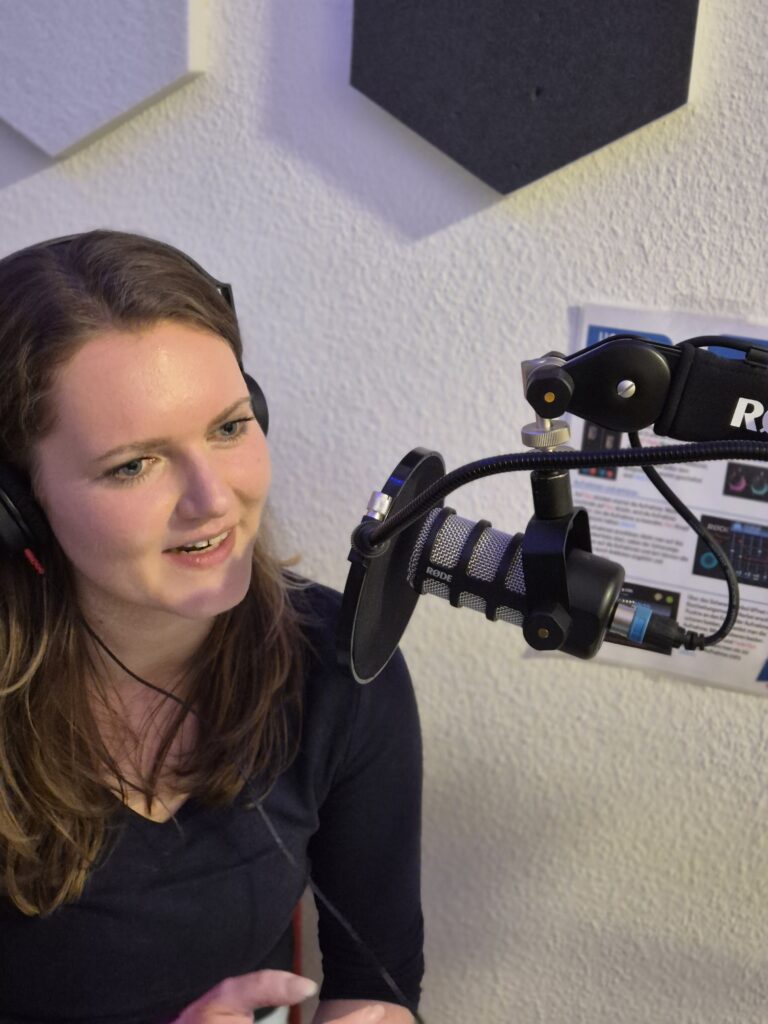

Impressions from the project

A final Thank You

Huge thanks go to Stefan Titze and Florentin Will from DAS PODCAST UFO for giving us the opportunity to collaborate on this project and for providing us not only with their data and voices but also with the platform to contribute to this important social discourse.

We would also like to thank the KI-Makerspace in Tübingen for providing us with space and equipment to record the resolution episode.